Correlation Analysis

Types of correlation, Karl Pearson's coefficient, Spearman's rank correlation, scatter diagrams, and significance testing with agricultural examples

Does more rainfall always mean higher crop yield? Does increasing fertiliser dose proportionally increase grain weight? These questions ask about the relationship between two variables — and correlation analysis is the statistical tool that measures the strength and direction of such relationships in agricultural research.

- When two continuous variables are concomitant, their joint distribution is a bivariate distribution; when the two variables are jointly normal, it is known as a bivariate normal distribution. The word concomitant here means that the two variables occur together or change together, for instance the height and weight of plants measured simultaneously.

- If there are more than two such variables, their joint distribution is known as a multivariate normal distribution. For example, if we measure plant height, number of tillers, and grain yield together, these three variables form a multivariate distribution.

- In case of bivariate or multivariate normal distributions, we may be interested in discovering and measuring the magnitude and direction of the relationship between two or more variables.

- For this purpose we use the statistical tool known as correlation. Correlation helps us answer the question: “Do these variables move together, and if so, how strongly?”

- Definition:

If a change in one variable is accompanied by a change in the other variable, the two variables are said to be correlated, and the degree of association (the extent of the relationship) is known as correlation.

- It studies the relation or association between two variables.

- Two independent variables are not interrelated.

- The measure of correlation is called the correlation coefficient (r) or correlation index, which summarizes in one figure the direction and degree of correlation. It is a single number that captures both how strong the relationship between two variables is and in which direction it runs.

- The correlation coefficient ranges from -1 to +1 (i.e., -1 ≤ r ≤ +1). Its absolute value never exceeds unity.

- If r = +1 then we say that there is a perfect positive correlation between x and y. All data points fall exactly on an upward-sloping straight line.

- If r = -1 then we say that there is a perfect negative correlation between x and y. All data points fall exactly on a downward-sloping straight line.

- If r = 0 then the two variables x and y are called uncorrelated variables. There is no linear relationship between them.

- No unit of measurement. The correlation coefficient is a pure number (dimensionless), meaning it does not depend on the units in which the variables are measured. Whether you measure yield in kg/ha or quintals/ha, the correlation coefficient remains the same.
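The unit-free property can be checked numerically. The sketch below is a minimal illustration, assuming a small set of hypothetical rainfall and yield figures (they are not data from this lesson) and a helper named `pearson_r` introduced only for this example.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation coefficient from paired observations."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sums of products and squares of deviations from the means
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical illustrative data (assumed, not from the lesson)
rainfall_mm = [620, 710, 680, 800, 750, 900]
yield_kg_ha = [2100, 2450, 2300, 2900, 2600, 3100]
yield_q_ha = [y / 100 for y in yield_kg_ha]  # 1 quintal = 100 kg

r_kg = pearson_r(rainfall_mm, yield_kg_ha)
r_q = pearson_r(rainfall_mm, yield_q_ha)

# The two coefficients agree up to floating-point rounding
print(r_kg, r_q)
```

Changing the unit multiplies every deviation of y by the same constant, which cancels between the numerator and the denominator, so r is unchanged.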

Types of Correlation

Positive

- If the two variables deviate in the same direction, i.e., if an increase (or decrease) in one variable is accompanied by a corresponding increase (or decrease) in the other variable, the correlation is said to be direct or positive.

- Examples:

- Heights and weights

- Household income and expenditure

- Amount of rainfall and yield of crops

- Prices and supply of commodities

- Feed and milk yield of an animal

- Soluble nitrogen and total chlorophyll in the leaves of paddy.

Negative correlation

- If the two variables constantly deviate in opposite directions, i.e., if an increase (or decrease) in one variable is accompanied by a corresponding decrease (or increase) in the other variable, the correlation is said to be inverse or negative.

- Examples:

- Price and demand of a good

- Volume and pressure of perfect gas

- Sales of woolen garments and the day temperature

- Yield of crop and plant infestation

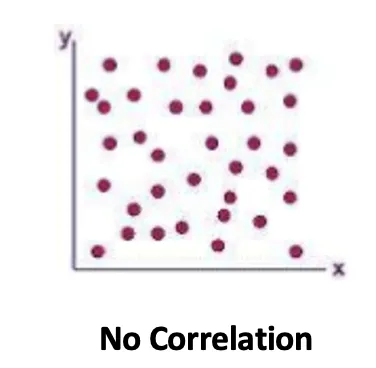

No or Zero Correlation

- If there is no relationship between the two variables, so that a change in the value of one variable is not accompanied by any systematic change in the other, the correlation is said to be absent or zero. In this case, knowing the value of one variable provides no useful information for predicting the value of the other.

Simple, Partial and Multiple Correlations

- Simple correlation: Only two variables are studied. For example, studying the relationship between fertilizer dose and crop yield alone.

- Partial correlation: More than two variables are studied, but the relationship between only two of them is considered, with the effect of the other influencing variables held constant. For example, studying the relationship between fertilizer and yield while holding rainfall constant.

- Multiple correlation: Three or more variables are studied simultaneously. This gives a comprehensive picture of how several variables together relate to a response variable.
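As a sketch of how "holding a third variable constant" works in practice, the standard first-order partial correlation can be computed from the three simple correlations: r12.3 = (r12 - r13·r23) / √[(1 - r13²)(1 - r23²)]. The function name `partial_r` and the three input values below are assumptions made for illustration, not results from this lesson.

```python
import math

def partial_r(r12, r13, r23):
    """First-order partial correlation of variables 1 and 2, holding variable 3 constant."""
    return (r12 - r13 * r23) / math.sqrt((1 - r13 ** 2) * (1 - r23 ** 2))

# Hypothetical simple correlations (assumed values):
# 1 = fertilizer dose, 2 = crop yield, 3 = rainfall
r_fert_yield = 0.70
r_fert_rain = 0.40
r_yield_rain = 0.60

r_partial = partial_r(r_fert_yield, r_fert_rain, r_yield_rain)
print(round(r_partial, 3))  # about 0.627
```

With these assumed inputs the fertilizer-yield correlation drops from 0.70 to about 0.63 once rainfall is held constant, which suggests that part of the simple correlation was shared with rainfall.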

Linear and Nonlinear Correlation

- If the amount of change in one variable tends to bear a constant ratio to the amount of change in the other variable, the correlation is said to be linear. Graphically, this relationship plots as a straight line.

- If the amount of change in one variable does not bear a constant ratio to the amount of change in the other variable, the correlation is said to be nonlinear. The relationship curve may be parabolic, exponential, or of some other form.

- In most practical situations we find a nonlinear relationship between variables. For instance, crop yield does not increase indefinitely with fertilizer: beyond an optimum point, additional fertilizer may actually reduce yield, creating a curvilinear relationship.

- In the absence of any relationship between the variables, the value of the correlation coefficient will be zero. The converse need not hold: r = 0 rules out only a linear relationship.
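A curvilinear relationship can produce r = 0 even though one variable is fully determined by the other. The sketch below assumes a hypothetical parabolic dose-response curve with its optimum at dose 2; the data and the helper `pearson_r` are illustrative assumptions.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation coefficient from paired observations."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical dose-response: yield rises to an optimum at dose 2, then falls
dose = [0, 1, 2, 3, 4]
yield_units = [10 - (d - 2) ** 2 for d in dose]  # 6, 9, 10, 9, 6

r = pearson_r(dose, yield_units)
print(r)  # 0.0: no linear trend, although yield depends entirely on dose
```

The rising and falling halves of the curve cancel each other in the sum of products, which is why a scatter diagram should be inspected before relying on r alone.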

Methods of studying Correlation

- Scatter Diagram

- Karl Pearson’s Coefficient of Correlation

- Spearman’s Rank Correlation

- Regression Lines

Each method has its own strengths: the scatter diagram is visual and intuitive, Karl Pearson’s method gives a precise numerical measure for linear relationships, Spearman’s method works with ranked data, and regression lines help in prediction.
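To illustrate the rank-based method named in the list above, here is a minimal sketch of Spearman's rank correlation using the standard no-ties formula ρ = 1 - 6Σd² / [n(n² - 1)], where d is the difference between the two ranks of each item. The function name `spearman_rho` and the judges' scores are hypothetical, and tied values are assumed not to occur.

```python
def spearman_rho(xs, ys):
    """Spearman's rank correlation for paired data without tied values."""
    n = len(xs)

    def ranks(values):
        # Rank 1 goes to the smallest value, rank n to the largest
        order = sorted(range(n), key=lambda i: values[i])
        result = [0] * n
        for rank, idx in enumerate(order, start=1):
            result[idx] = rank
        return result

    rank_x, rank_y = ranks(xs), ranks(ys)
    d_squared = sum((a - b) ** 2 for a, b in zip(rank_x, rank_y))
    return 1 - 6 * d_squared / (n * (n ** 2 - 1))

# Hypothetical scores given by two judges to five crop varieties
judge_a = [86, 72, 91, 65, 78]
judge_b = [80, 75, 95, 60, 70]

rho = spearman_rho(judge_a, judge_b)
print(rho)  # 0.9
```

Here the two judges disagree on only two adjacent ranks, so Σd² = 2 and ρ = 1 - 12/120 = 0.9.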

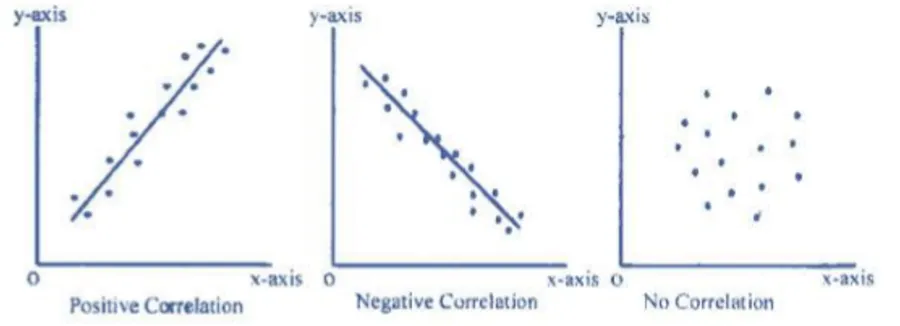

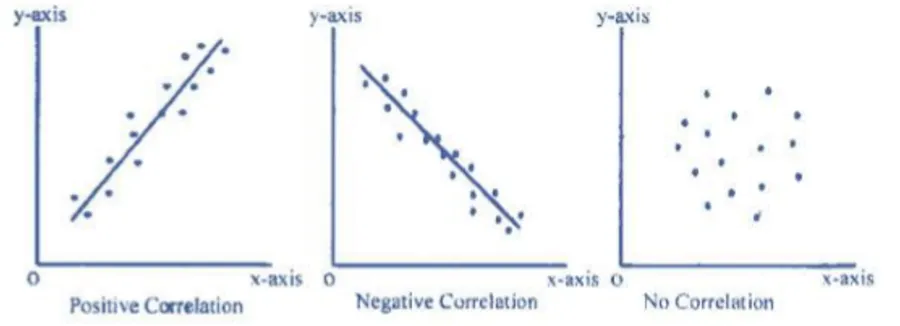

Scatter diagram

- It is the simplest diagrammatic representation of bivariate data. For the bivariate distribution (xi, yi), i = 1, 2, …, n, if the values of the variables X and Y are plotted along the x-axis and y-axis respectively in the xy-plane, the diagram of dots so obtained is known as a scatter diagram.

- From the scatter diagram, if the points are very close to each other, we should expect a fairly good amount of correlation between the variables and if the points are widely scattered, a poor correlation is expected. This method, however, is not suitable if the number of observations is fairly large. While not precise, the scatter diagram gives a quick visual impression of the nature and strength of the relationship.

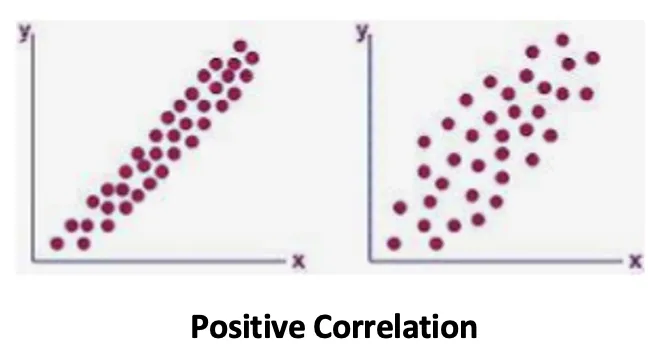

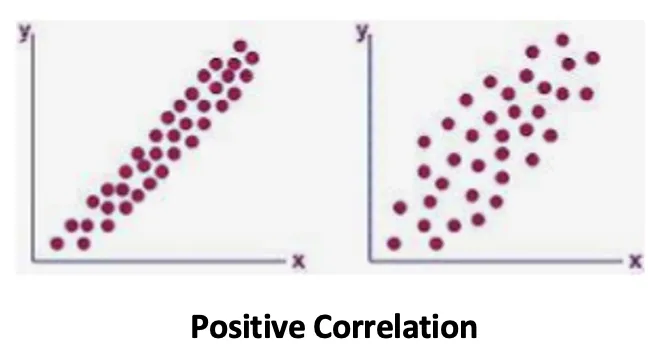

Positive Correlation

- If the plotted points show an upward trend along a straight line, we say that the two variables are positively correlated.

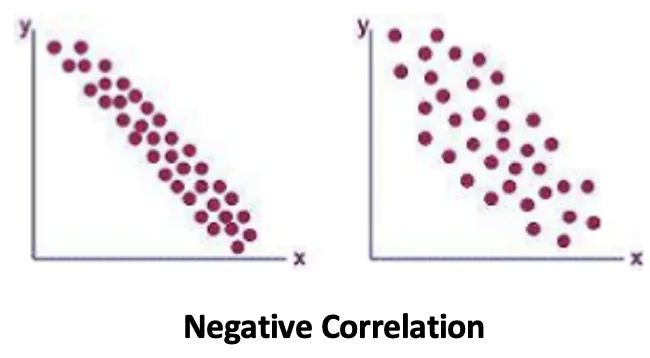

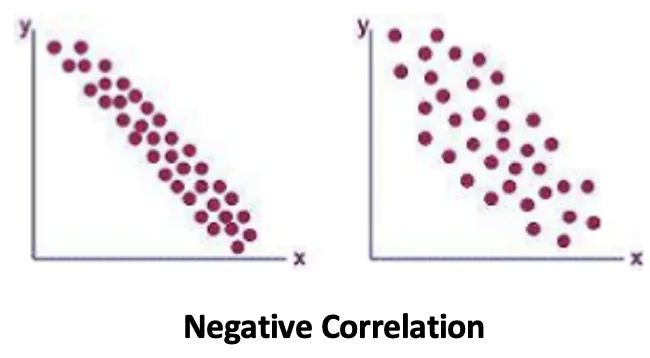

Negative Correlation

- If the plotted points show a downward trend along a straight line, we say that the two variables are negatively correlated.

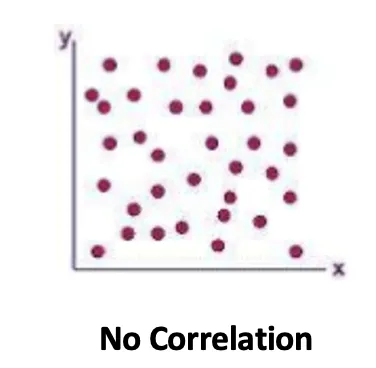

No Correlation

- If the plotted points are spread over the whole of the graph sheet with no visible trend, we say that the two variables are not correlated.

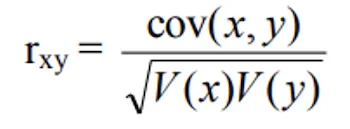

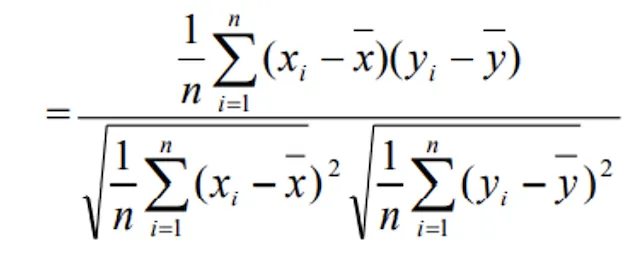

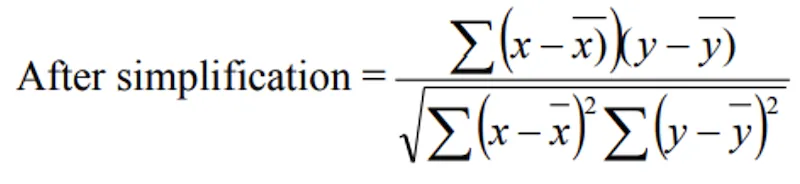

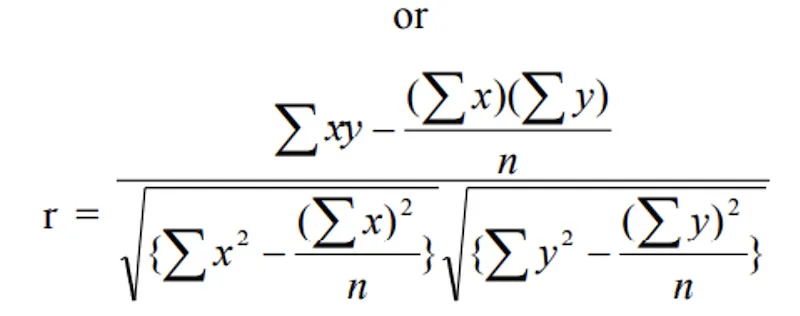

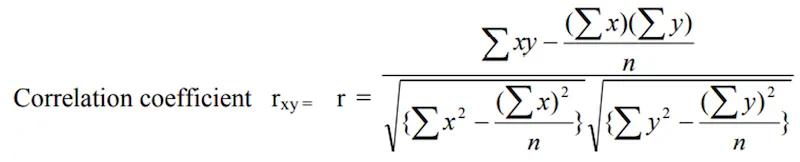

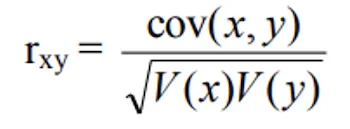

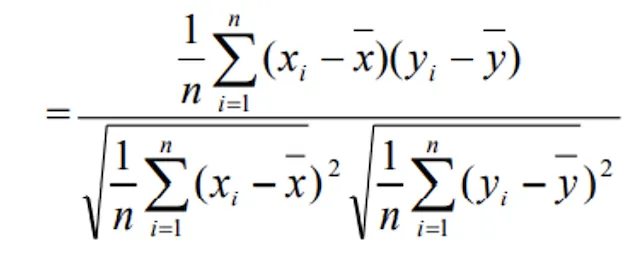

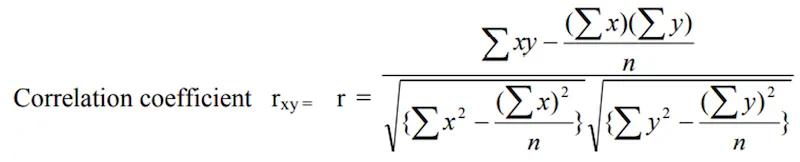

Karl Pearson’s Coefficient of Correlation

- Prof. Karl Pearson, a British biometrician, suggested a measure of correlation between two variables, known as Karl Pearson’s coefficient of correlation. It is useful for measuring the degree of linear relationship between the two variables X and Y. This is the most widely used method for computing correlation in agricultural and biological research.

- It is usually denoted by rxy or ‘r’.

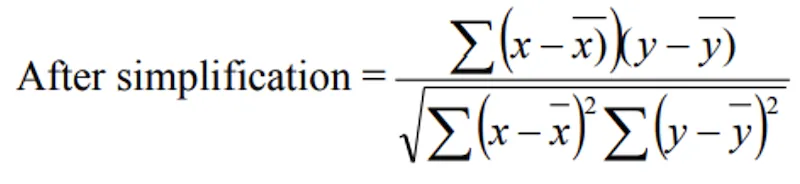

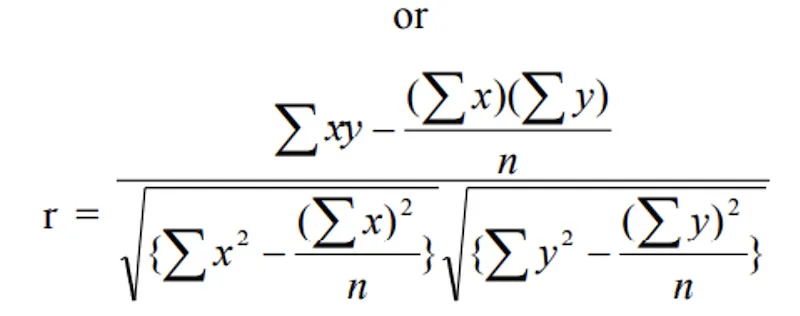

i) Direct Method:

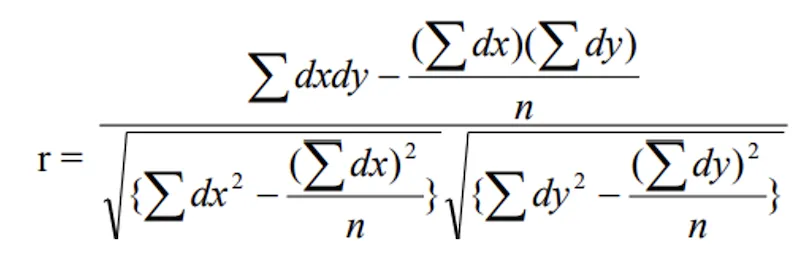

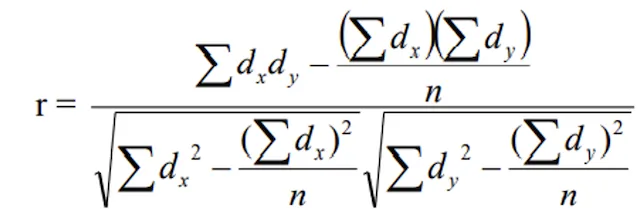

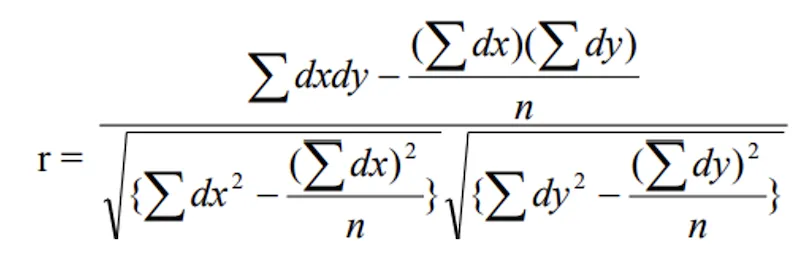

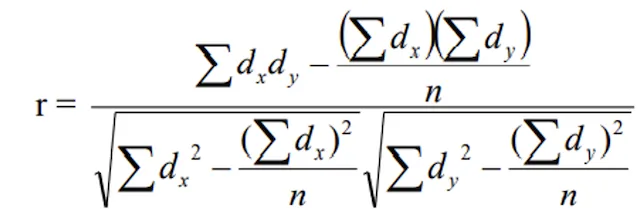
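For reference, the direct method is usually written in the following standard textbook form, which is assumed here to correspond to the formulas shown in the figures above. The sums run over the n paired observations, and σx, σy are the standard deviations of x and y.

```latex
r = \frac{\operatorname{Cov}(x, y)}{\sigma_x \, \sigma_y}
  = \frac{\sum xy - \dfrac{(\sum x)(\sum y)}{n}}
         {\sqrt{\left[\sum x^2 - \dfrac{(\sum x)^2}{n}\right]
                \left[\sum y^2 - \dfrac{(\sum y)^2}{n}\right]}}
```

The factor 1/n that appears in the covariance and in each variance cancels, which is why only the raw sums are needed.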

ii) Deviation method

Where:

- σx = S.D. of x and σy = S.D. of y

- n = number of items

- dx = x - A, dy = y - B

- A = assumed mean of x and B = assumed mean of y

The deviation method simplifies calculations by using assumed means (A and B) to reduce the size of numbers being worked with. This was especially useful before calculators and computers became widespread.
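The choice of assumed means does not affect the result, because subtracting a constant from every observation leaves the deviations from the true mean unchanged. The sketch below checks this, assuming the usual deviation-method form (the direct formula applied to dx and dy). The data, the assumed means A = 23 and B = 115, and the function names are hypothetical.

```python
import math

def r_direct(xs, ys):
    """Pearson's r from raw sums (direct method)."""
    n = len(xs)
    sum_x, sum_y = sum(xs), sum(ys)
    sum_xy = sum(x * y for x, y in zip(xs, ys))
    sum_xx = sum(x * x for x in xs)
    sum_yy = sum(y * y for y in ys)
    numerator = sum_xy - sum_x * sum_y / n
    denominator = math.sqrt((sum_xx - sum_x ** 2 / n) * (sum_yy - sum_y ** 2 / n))
    return numerator / denominator

def r_deviation(xs, ys, a, b):
    """Pearson's r from deviations dx = x - A and dy = y - B (deviation method)."""
    dx = [x - a for x in xs]
    dy = [y - b for y in ys]
    # Same formula as the direct method, applied to the smaller numbers dx, dy
    return r_direct(dx, dy)

# Hypothetical panicle lengths (cm) and grains per panicle (assumed data)
length_cm = [20.5, 22.0, 23.5, 21.0, 24.5, 25.0]
grains = [98, 110, 121, 102, 130, 134]

r1 = r_direct(length_cm, grains)
r2 = r_deviation(length_cm, grains, a=23, b=115)
print(r1, r2)  # equal up to floating-point rounding
```

Any convenient round numbers near the centre of the data can serve as A and B; values close to the true means simply keep the intermediate sums small.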

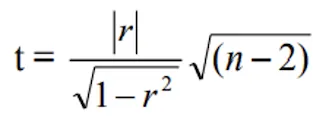

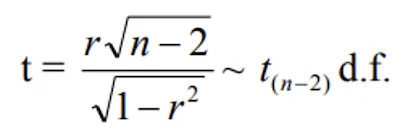

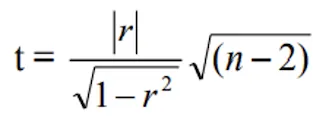

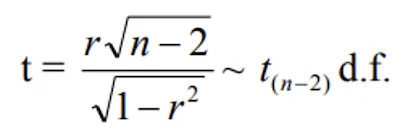

Test for significance of correlation coefficient

- If “r” is the observed correlation coefficient in a sample of “n” pairs of observations from a bivariate normal population with population correlation coefficient ρ, it is often of interest to test, on the basis of “r”, whether ρ is zero or different from zero. The null hypothesis is

H0: ρ = 0

This null hypothesis states that the population correlation coefficient (denoted by the Greek letter rho, ρ) is zero, meaning there is no true linear relationship between the two variables in the population. The sample correlation “r” that we compute could simply be due to sampling fluctuation.

- Prof. Fisher proved that, under H0: ρ = 0, the appropriate test statistic for testing against the alternative hypothesis H1: ρ ≠ 0 is

t = r√(n - 2) / √(1 - r²)

- This statistic follows Student’s t-distribution with (n - 2) d.f. The degrees of freedom are (n - 2) because two parameters (the means of X and Y) have been estimated from the data.

- If the calculated value of |t| is greater than the table value of t with (n - 2) d.f. at the specified level of significance, the null hypothesis is rejected; that is, there is a significant correlation between the two variables. Otherwise, the null hypothesis is not rejected.
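The decision rule can be sketched in a few lines. The example assumes a hypothetical sample correlation r = 0.60 from n = 12 pairs, and takes the table value 2.23 for 10 d.f. at the 5% level as quoted in the lesson's worked example. The function names are illustrative.

```python
import math

def t_statistic(r, n):
    """t = r * sqrt(n - 2) / sqrt(1 - r^2), with n - 2 degrees of freedom."""
    return r * math.sqrt(n - 2) / math.sqrt(1 - r ** 2)

def is_significant(r, n, t_table):
    """Two-sided test: reject H0 (rho = 0) when |t| exceeds the table value."""
    return abs(t_statistic(r, n)) > t_table

# Hypothetical sample result (assumed): r = 0.60 from n = 12 pairs
t_calc = t_statistic(0.60, 12)
print(round(t_calc, 2))                 # about 2.37
print(is_significant(0.60, 12, 2.23))   # True: 2.37 > 2.23
```

Using the absolute value of t lets the same comparison cover both positive and negative correlations.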

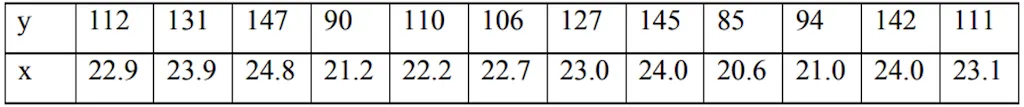

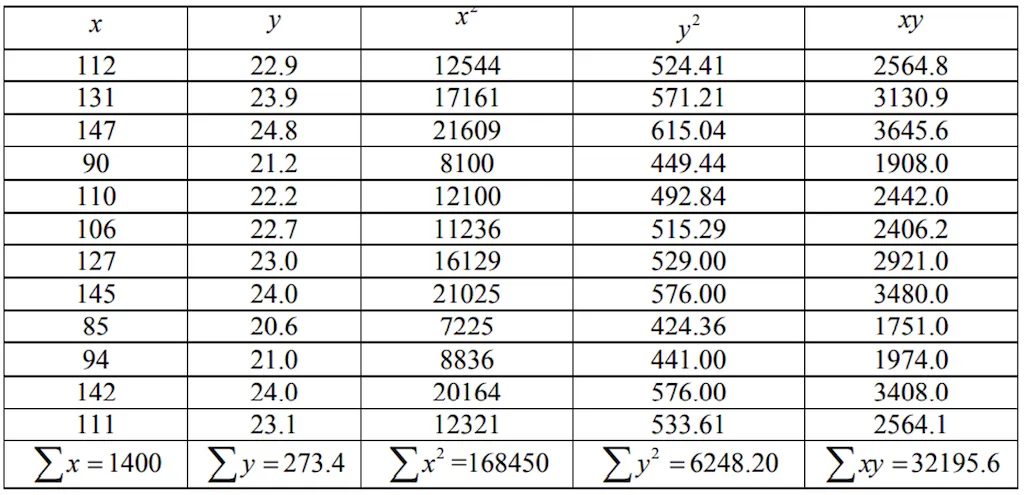

Example

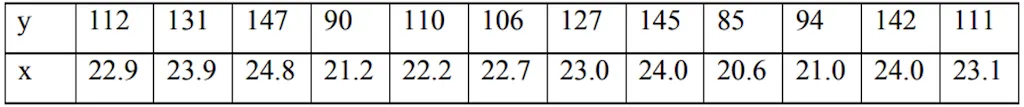

- From a paddy field, 12 plants were selected at random. The length of panicles in cm (x) and the number of grains per panicle (y) of the selected plants were recorded. The results are given in the following table. Calculate the correlation coefficient and test its significance.

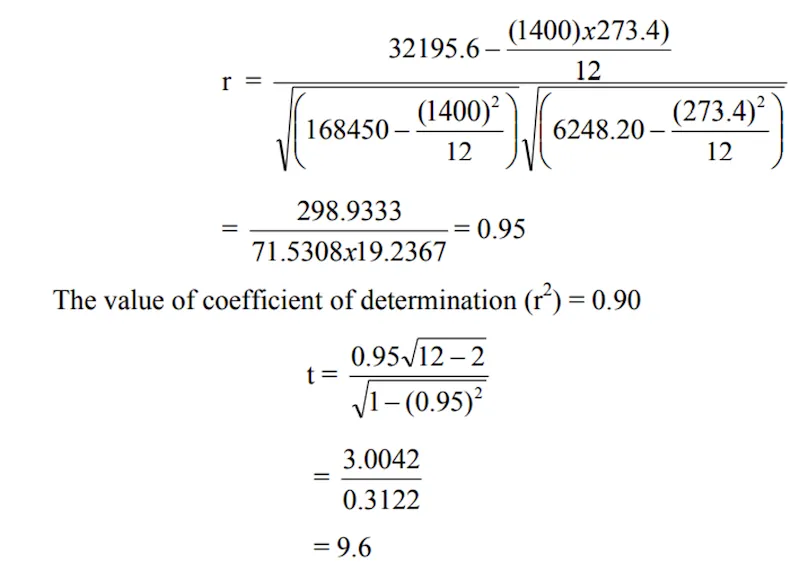

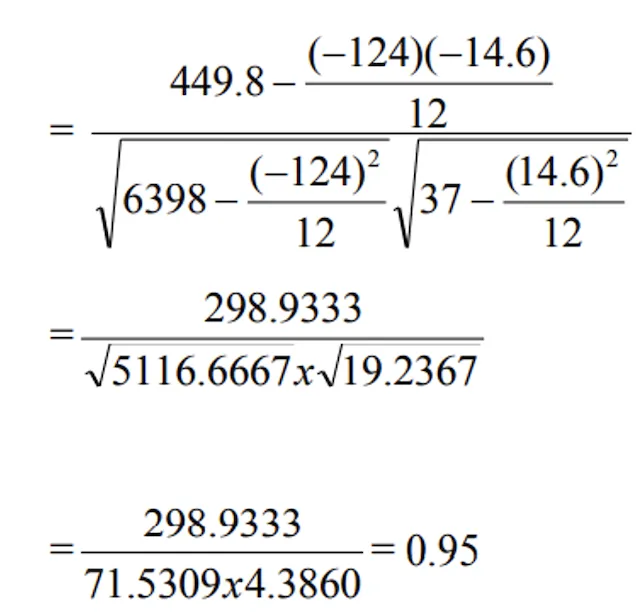

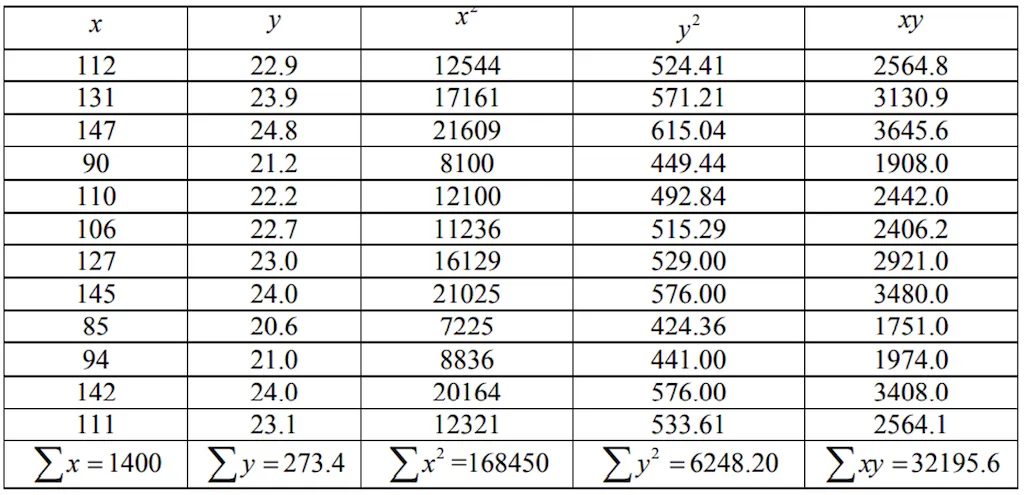

Solution:

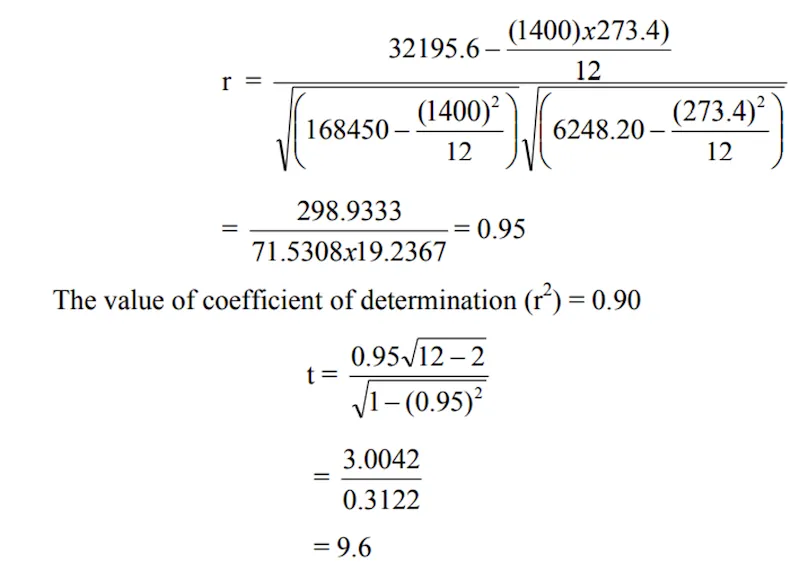

a) Direct Method:

- Where, n = number of observations

- Testing the correlation coefficient:

- Null hypothesis H0: Population correlation coefficient “ρ” = 0

- Under H0, the test statistic becomes

- The critical (table) value of t for 10 d.f. at the 5% level of significance (LOS) is 2.23

- Since the calculated value of t (9.6) is greater than the table value (2.23), it can be inferred that there is a significant positive correlation between x and y. This means the relationship between panicle length and number of grains is unlikely to be due to chance: longer panicles tend to have more grains.
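As a consistency check on the quoted figures, the test statistic can be inverted to recover the correlation coefficient it implies: from t = r√(n - 2)/√(1 - r²) it follows that r = t/√(t² + n - 2). The sketch below applies this to the stated t = 9.6 and n = 12; the function name `r_from_t` is an assumption, and the recovered value is only as precise as the rounded t.

```python
import math

def r_from_t(t, n):
    """Invert t = r * sqrt(n - 2) / sqrt(1 - r^2) to recover r."""
    return t / math.sqrt(t ** 2 + (n - 2))

# Values quoted in the worked example: calculated t = 9.6 with n = 12 plants
r_implied = r_from_t(9.6, 12)
print(round(r_implied, 2))  # about 0.95
```

A calculated t of 9.6 on 10 d.f. therefore corresponds to a sample correlation of roughly 0.95, a very strong positive linear association.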

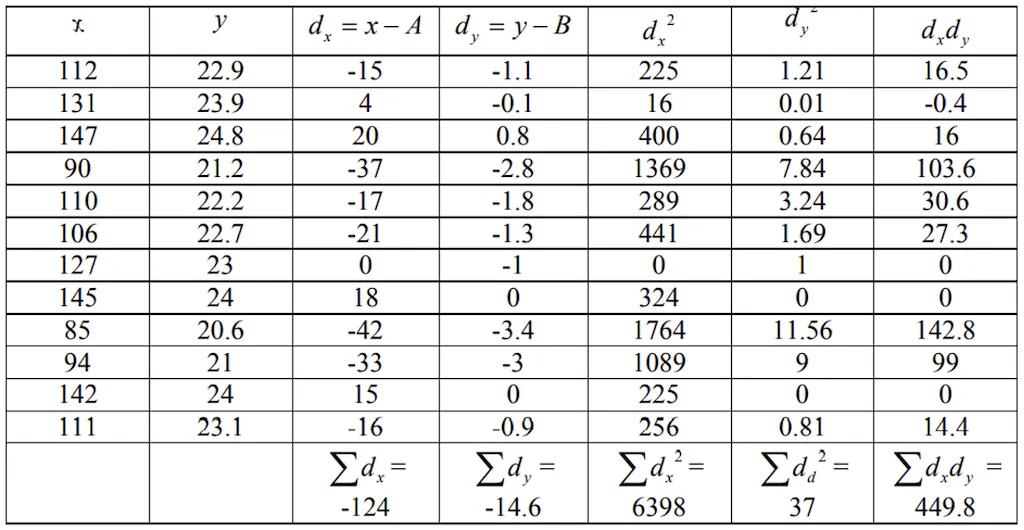

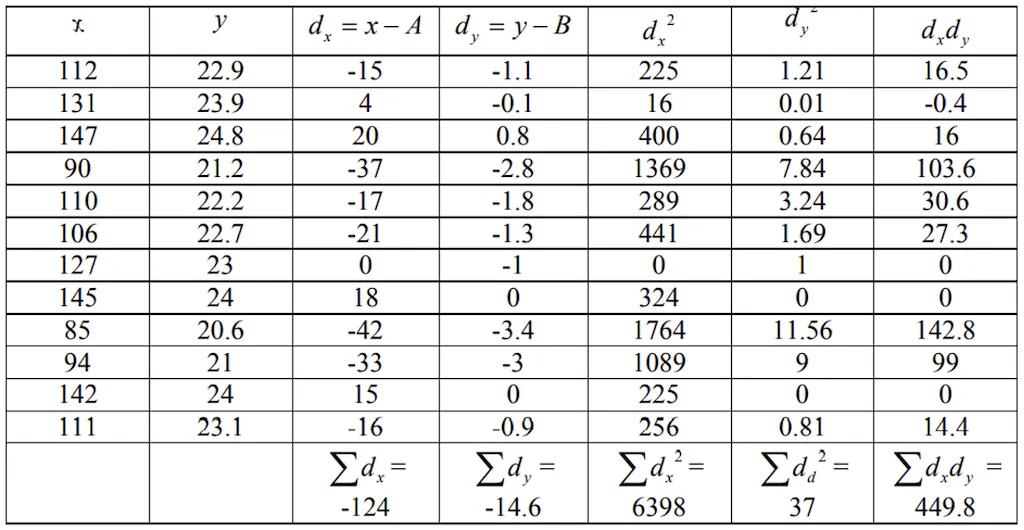

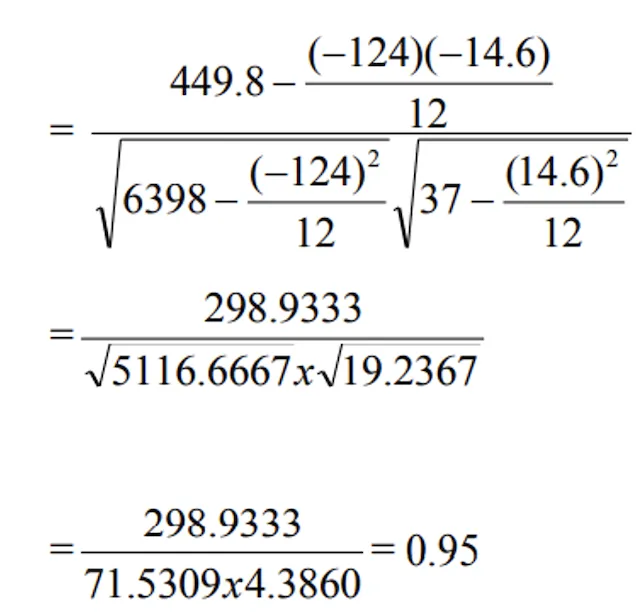

b) Indirect (Deviation) Method:

- Here A = 127 and B = 24

Summary Table

| Concept | Key Point | Exam Tip |
|---|---|---|
| Correlation coefficient (r) | Measures strength and direction of linear relationship | Range: -1 to +1; unit-free |
| r = +1 | Perfect positive correlation | All points on upward line |
| r = -1 | Perfect negative correlation | All points on downward line |
| r = 0 | No linear relationship | Variables are uncorrelated |
| Positive example | Rainfall and crop yield | Both increase together |
| Negative example | Price and demand | One up, other down |
| Simple correlation | Two variables only | Most common in exams |
| Partial correlation | Two variables, others held constant | Isolates one relationship |
| Multiple correlation | Three or more variables | Comprehensive analysis |
| Karl Pearson’s r | Most widely used method | For linear relationships |
| Test statistic | t = r√(n-2)/√(1-r²) | d.f. = n - 2 |
| Scatter diagram | Visual method | Quick impression of relationship |

TIP

Mnemonic for correlation types: “SiPMu” — Simple (2 variables), Partial (2 active, rest constant), Multiple (3+ variables).

Summary Cheat Sheet

| Concept / Topic | Key Details |
|---|---|
| Correlation coefficient (r) | Measures strength and direction of linear relationship |
| Range of r | -1 to +1; never exceeds unity; no unit (dimensionless) |
| r = +1 | Perfect positive correlation; all points on upward line |
| r = -1 | Perfect negative correlation; all points on downward line |
| r = 0 | Variables are uncorrelated; no linear relationship |
| Positive correlation | Both variables deviate in same direction (e.g., rainfall and yield) |
| Negative correlation | Variables deviate in opposite direction (e.g., price and demand) |
| Simple correlation | Only two variables studied |
| Partial correlation | Two variables studied; others held constant |
| Multiple correlation | Three or more variables studied simultaneously |
| Linear correlation | Change bears a constant ratio; plots as straight line |
| Nonlinear correlation | Change does not bear constant ratio; curved relationship |
| Scatter diagram | Simplest visual method for bivariate data |
| Karl Pearson’s r | Most widely used; measures degree of linear relationship |
| Bivariate normal | Joint distribution of two continuous concomitant variables |
| Test statistic for r | t = r√(n-2)/√(1-r²); follows t-distribution with (n-2) d.f. |
| Significance test | If t_calc > t_table → reject H₀ (significant correlation) |
| Direct method | Uses raw values of X and Y directly |
| Deviation method | Uses assumed means A and B to simplify calculations |
| Positive example | Heights-weights, feed-milk yield, rainfall-crop yield |
| Negative example | Price-demand, yield-pest infestation |
| Practical situations | Most relationships are nonlinear in practice |


